In this first post of the series, I want to address the simplest and most important question to ask about a machine learning start-up or application:

Question: Is there existing training data? If not, how do they plan on getting it?

To understand the answers to this question, you first have to understand what training data is and, from there, which tasks or ideas would be extremely difficult to capture in training data. I’ll address both in this post.

Most useful AI applications require training data: examples of the phenomenon they’re trying to replicate with the computer. If a start-up or group proposes a solution to a problem and they don’t have training data, you should be much more skeptical of that solution; at that point it’s meandering into magic, great expense, or both.

I like to think of training data as artificial intelligence’s dirty secret. It almost never gets mentioned in the press, but it is the topic of Day 1 of any machine learning class and forms the theoretical basis for what you learn the rest of the semester. Techniques that use training data are often called statistical methods, since they gather statistics about the data they’re given in order to make predictions; this is in contrast to the rule-driven methods that preceded them.

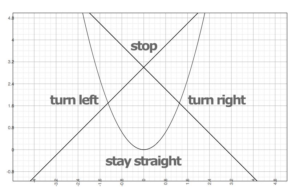

For example, if you want a car that drives itself, you don’t write code that defines, in detail, what a road looks like in a digital image, then what a car looks like, and so on. Instead, you take hours of camera and sensor readings (inputs) and steering wheel and pedal states (outputs) from people driving, then use those examples to learn how a car is driven. In machine learning terms, these input/output pairs allow us to create a model that predicts the output from the input. In other words, machine learning is fundamentally about learning a function, in the same sense as the functions you met in algebra, between the input and output of a data set.
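To make “learning a function” concrete, here is a minimal sketch under heavy simplifying assumptions: the input is a single number rather than camera and sensor readings, the function is assumed to be a straight line, and the training pairs are invented for illustration. The names `fit_line` and `model` are hypothetical. A real driving system learns a vastly more complex function, but the principle is the same: the examples, not hand-written rules, determine what the function is.

```python
# Hypothetical toy "training data": (input, output) pairs, invented for
# illustration. They happen to follow output = 2 * input + 1 exactly.
training_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]


def fit_line(pairs):
    """Learn the slope a and intercept b of y = a*x + b by ordinary least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    # Covariance of x and y, and variance of x (both left unnormalized,
    # since the normalization cancels in the ratio).
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b


slope, intercept = fit_line(training_data)


def model(x):
    """The learned function: predict an output for an input it has never seen."""
    return slope * x + intercept


print(slope, intercept)  # prints 2.0 1.0
print(model(10.0))       # prints 21.0
```

Swap in different training pairs and the learned slope and intercept change with them; the code itself contains no knowledge of the relationship it ends up modeling.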

In natural language processing, the same applies to software that analyzes sentences for their syntactic or rhetorical structure. The input is a sequence of word tokens; the output is some linguistic analysis of those tokens: sentence structure, rhetorical structure, salient entities, document category, and so on.
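To show what it means for a statistical method to “gather statistics” from NLP training data, here is a minimal sketch of a most-frequent-tag baseline for part-of-speech tagging: the input is a word token, the output is a tag, and the function between them is learned purely by counting. The four tagged sentences, the tag names, the `NOUN` fallback for unseen words, and the helpers `train_tagger` and `tag` are all hypothetical. Real taggers use far more data and look at context, but notice that no rule about English grammar is written anywhere.

```python
from collections import Counter, defaultdict

# Hypothetical hand-labeled training data: each sentence is a list of
# (word token, part-of-speech tag) pairs. The tag names are illustrative.
tagged_sentences = [
    [("the", "DET"), ("dog", "NOUN"), ("runs", "VERB")],
    [("the", "DET"), ("runs", "NOUN"), ("ended", "VERB")],
    [("a", "DET"), ("dog", "NOUN"), ("barks", "VERB")],
    [("she", "PRON"), ("runs", "VERB"), ("daily", "ADV")],
]


def train_tagger(sentences):
    """Gather statistics: count how often each word appears with each tag."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        for word, pos in sentence:
            counts[word][pos] += 1
    # The learned "function" maps each seen word to its most frequent tag.
    return {word: pos_counts.most_common(1)[0][0] for word, pos_counts in counts.items()}


def tag(learned_tags, tokens, default="NOUN"):
    """Apply the learned mapping; words never seen in training get a default tag."""
    return [(tok, learned_tags.get(tok, default)) for tok in tokens]


learned_tags = train_tagger(tagged_sentences)
print(tag(learned_tags, ["the", "dog", "runs"]))
# prints [('the', 'DET'), ('dog', 'NOUN'), ('runs', 'VERB')]
```

Here “runs” is tagged `VERB` only because it appears as a verb twice and as a noun once in the training data; give the same code different training data and it learns a different function.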

If someone proposes a software idea to you, think about the task at hand and remember that machine learning amounts to learning a function. Can the thing they want the computer to do be reliably modeled, or at least approximated, as a function from some input to some output? If it can, is there an existing repository of such training data?

A good example is Google’s self-driving cars, which didn’t pop up out of an engineer’s imagination one day. Remember Google Street View? For years before self-driving cars were even on the radar as a possible commercial application, Google was collecting training data with Street View cars; it took millions of dollars in capital and labor to gather enough data to start making cars that drive themselves. They strove to drive down every street on the planet and record gold-standard data for every mile of road. When you have an example of nearly every possible drive, much of the guesswork is removed.

While training data is often expensive to acquire, that’s not always the case. There are clever ways to design a product so that its users supply the training data, but that approach always carries a dangerous Catch-22: the product starts out useless. How will you attract users to a product that doesn’t do anything? Like an empty social network, an AI product that requires training data to operate but has none is of no practical use.

One more thing to watch out for: training data needs to be relevant. Having just any data is not enough to qualify as training data. For example, if someone wants to build a chatbot and the data they describe isn’t of people actually having conversations, then they don’t have training data.

Similarly, someone might tell you that they have WordNet or another ontological resource lying around and that this is their training data. If their goal is to classify words, fine. If their goal is to build a chatbot from WordNet, then they don’t have training data (and don’t really know what they’re doing).

And chatbots are just the start. What about someone trying to replace doctors? Where do you find doctor training data? Medical textbooks and Wikipedia don’t count; while much of the information doctors have to memorize in med school is in those texts, the actual act of diagnosing and treating a patient is not cataloged there. That is learned by doing. Do you want a robot with sharp tools collecting “oops, killed patient” data on you? I didn’t think so.

The same goes for lawyers. While the law is formal and involves formal procedures, much of the practice of law is about understanding nuance, about finding ways to apply the details of a case to a formal system with qualitative underpinnings. In a courtroom, it’s about using that nuance to convince a jury, which involves not just argumentation but also charisma and rhetorical skill. There is no training data for any of this.

What about CEOs? There was an article floating around recently that proposed replacing CEOs with software. How do you get CEO training data? You can’t. Their solution:

“In general when you pit professional judgments – even expert judgment – against formulas, algorithms, simple rules that combine information, in general the rules beat the experts,”

There are a lot of things wrong with this sentence. What algorithm chooses which formulae are relevant? What algorithm synthesizes conflicting information, builds strategic vision, and conveys that to a human workforce? What algorithm negotiates a multinational supply chain with suppliers across linguistic boundaries? What algorithm chooses who gets fired?

Not to mention, the proposed method of devising AI isn’t even the state of the art; it’s how people did things 30 years ago. Statistical methods perform better than hand-crafted rules, even if they sometimes work in bizarre ways, and they do so far more cheaply. They handle subtle complexity much better than elegant rules constructed by a human, which are always a house of cards. If you have training data, statistical methods can be applied meaningfully. If you don’t, you’re working in the dark.

tl;dr: training data is a set of observations that can be used to learn a function from inputs to outputs, and it constitutes the foundation of machine learning. Not all tasks can yet be captured this way.

Of course, training data is not always required, but employing unsupervised techniques (ones that don’t rely on input/output examples) is risky. Such applications aren’t impossible, but you should be highly skeptical when you encounter one.

One way to mitigate such skepticism is to evaluate your results systematically. After all, if you can show your components work without training data, then you don’t have to worry about lacking training data in the first place. However, that evaluation has to be done right, or else it’s garbage. I’ll be talking about evaluation in the next post.